Tanay’s Newsletter is a free weekly newsletter about the business of the technology industry.

Hi friends,

This week I’ll discuss OpenAI's recent Dev Day, which included some major announcements that should continue to shake things up in AI.

I’ll cover the main announcements and their implications.

Announcements

I. GPT-4 Turbo

The newly announced GPT-4 Turbo boasts a number of improvements vs GPT-4:

128K context length, a significant jump from 32K

Better cost (~3x cheaper) and latency (2x faster)

Improved instruction following and function calling

Implications:

More workloads: OpenAI announced that 92% of Fortune 500 companies are already using OpenAI. Improved cost and latency should help move more of these workloads into production.
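To make the cost point concrete, here is a rough sketch of the arithmetic. The per-token prices are my assumption of the list prices around Dev Day (GPT-4 at $0.03 / $0.06 per 1K input / output tokens, GPT-4 Turbo at $0.01 / $0.03), and the request sizes are made up for illustration, so check the current pricing page before relying on any of it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """USD per 1K tokens. Values below are assumed list prices, not guaranteed."""
    input_per_1k: float
    output_per_1k: float


# Assumed prices around Dev Day; verify against the current pricing page.
GPT4 = Pricing(input_per_1k=0.03, output_per_1k=0.06)
GPT4_TURBO = Pricing(input_per_1k=0.01, output_per_1k=0.03)


def request_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of a single request with the given token counts."""
    return (input_tokens / 1000) * pricing.input_per_1k + (
        output_tokens / 1000
    ) * pricing.output_per_1k


if __name__ == "__main__":
    # Hypothetical workload: 3,000 prompt tokens and 500 completion tokens.
    old = request_cost(GPT4, 3000, 500)
    new = request_cost(GPT4_TURBO, 3000, 500)
    print(f"GPT-4: ${old:.3f}  GPT-4 Turbo: ${new:.3f}  ratio: {old / new:.2f}x")
```

Under these assumed prices, input tokens are 3x cheaper and output tokens 2x cheaper, so the blended saving sits somewhere between 2x and 3x and gets closer to 3x the more prompt-heavy the workload is.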

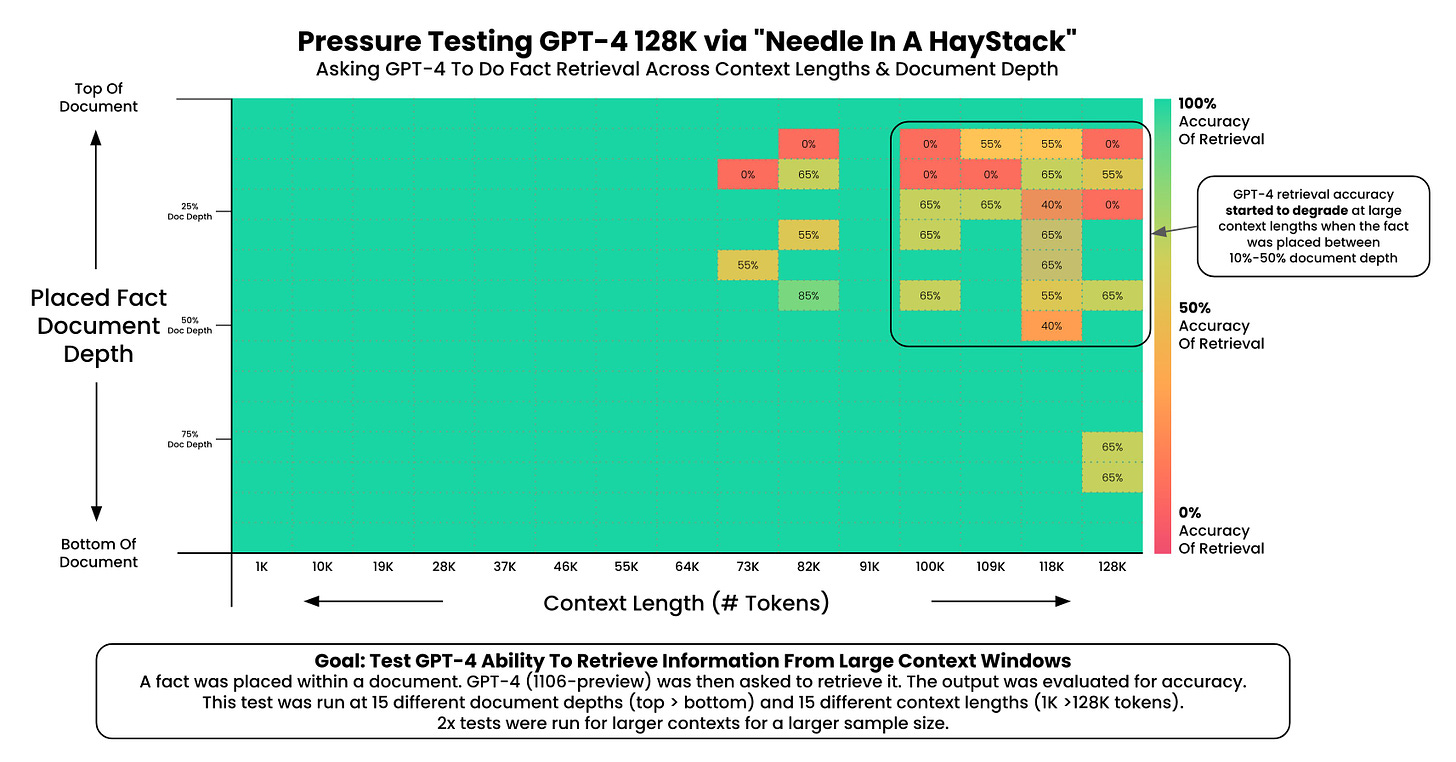

Long context windows: Longer context length closes a gap with Anthropic’s models, and should help OpenAI maintain or gain share vs them. It will also help replace techniques such as RAG for some use cases, though, as the analysis below shows, long context windows are not always a substitute for RAG, even at smallish context lengths, since accuracy tends to degrade as prompts get longer.
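The trade-off between stuffing everything into the prompt and retrieving only the relevant pieces can be sketched in a few lines. This is a toy illustration, not how any production RAG system works: the 4-characters-per-token estimate is a rough heuristic, and the keyword-overlap score is a stand-in I'm using in place of a real embedding search.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate: about 4 characters per token (assumption)."""
    return max(1, len(text) // 4)


def overlap_score(query: str, chunk: str) -> int:
    """Number of distinct lowercase words shared by the query and the chunk."""
    return len(set(query.lower().split()) & set(chunk.lower().split()))


def build_context(query: str, chunks: list[str], budget_tokens: int) -> list[str]:
    """Return all chunks if they fit the budget; otherwise the best-scoring ones that fit."""
    total = sum(estimate_tokens(c) for c in chunks)
    if total <= budget_tokens:
        # Long-context path: everything fits, so no retrieval step is needed.
        return list(chunks)

    # Retrieval path: rank chunks by relevance, then greedily fill the budget.
    ranked = sorted(chunks, key=lambda c: overlap_score(query, c), reverse=True)
    selected, used = [], 0
    for chunk in ranked:
        cost = estimate_tokens(chunk)
        if used + cost <= budget_tokens:
            selected.append(chunk)
            used += cost
    return selected


if __name__ == "__main__":
    docs = [
        "GPT-4 Turbo has a 128K context window",
        "Whisper v3 handles speech to text",
        "DALL-E 3 generates images",
    ]
    print(build_context("context window size", docs, budget_tokens=100))
    print(build_context("context window size", docs, budget_tokens=10))
```

With a generous budget, everything goes into the prompt. With a tight one, only the most relevant chunk survives. Even when everything fits, the retrieval path can still be worth keeping, since shorter and more focused prompts tend to hold up better on accuracy.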

II. Multimodality in GPT-4

Multimodality was another big theme, particularly on the API side for developers. OpenAI announced that the following would be available via APIs, both in GPT-4 and independently:

DALL·E 3 for image generation

A new version of Whisper, v3, for speech-to-text

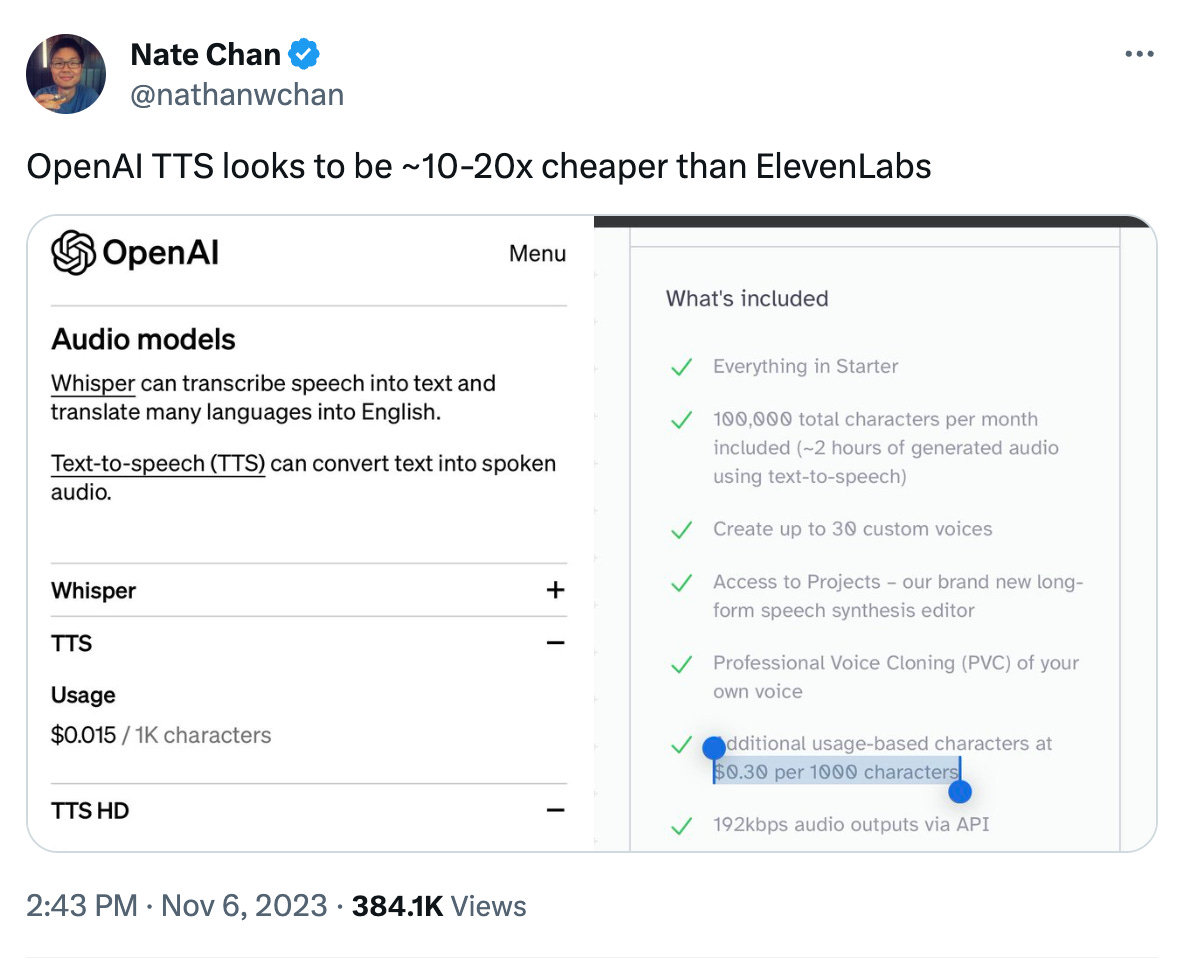

A text-to-speech API for the first time, which is competitively priced

A vision API for image understanding, which was already starting to roll out

Implications:

Impact on other models: It’ll be interesting to see how domain-specific models in each of these areas fare vs OpenAI’s bundled approach.

Multimodal applications: As AI's sensory capabilities continue to broaden and the state of the art continues to improve, the way is paved for novel applications that integrate multiple modalities.

III. The GPT Store

OpenAI also announced the ability for anyone to create their own “GPTs”, which are basically custom mini versions of ChatGPT with a specific prompt, knowledge base, and tool access. These GPTs can then be listed on a GPT Store, shared with others, and monetized.

A few things stand out:

Ease of creation: GPTs can be created via a no-code UI, simply by having a conversation. The builder lets you upload files to give the GPT additional knowledge, helps craft the right prompts based on what you’re trying to achieve, and lets the GPT access external APIs.

The Store itself: These can then be listed on a store and monetized over time, suggesting OpenAI’s ambition of creating an App Store for AI and a central place where people go to use AI in ChatGPT.

Implications:

A boom in indie entrepreneurship: While on the surface these tools shouldn’t be able to do much more than GPT-4 itself, the ease of use should unlock a new level of creativity and allow a new set of people to quickly build them to solve specific problems. The ability to monetize them should only help further. Based on a quick search, there are already more than 6,000 of these mini GPTs that are public.

The new app store and interfaces of the future?: One interesting open question is which interface people will use to get stuff done in the future. OpenAI is trying to make ChatGPT a superapp-style interface that a lot of people go to, but prior learnings from Plugins indicated that people want ChatGPT where they already get stuff done, and not the other way around. So will ChatGPT and this store be a meaningful central hub, or will the APIs that OpenAI lets developers build with provide this functionality in the existing apps people use? It’s hard to know for sure, but it seems like we’ll see a lot of the latter.

IV. Assistants API

OpenAI also announced an Assistants API, which provides additional functionality and simplifies some of the things developers were doing using other tools. In some ways, it's the API version of the functionality made available for creating “GPTs” above.

It features:

Memory of threads and conversations with the chatbot

Native retrieval and handling of external files directly, making it easy to add external knowledge to the model

Enhanced Code Interpreter and function calling for using other tools, made available as part of the API
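For readers who haven't used function calling, here is a minimal sketch of the developer's side of it. It makes no network calls: the tool definition follows the JSON-schema shape of OpenAI's function-calling format as I understand it, `get_weather` is a hypothetical tool that returns canned data, and the tool call at the bottom is hand-written to stand in for what a model would emit.

```python
import json


def get_weather(city: str, unit: str = "celsius") -> dict:
    """Hypothetical tool; returns canned data instead of calling a weather service."""
    return {"city": city, "unit": unit, "temperature": 21}


# Tool description the developer would send along with the conversation.
TOOLS = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current temperature for a city.",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {"type": "string"},
                    "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
                },
                "required": ["city"],
            },
        },
    }
]

# Maps tool names the model may request to local Python callables.
REGISTRY = {"get_weather": get_weather}


def dispatch(tool_call: dict) -> str:
    """Run the requested tool and return its result as a JSON string for the model."""
    name = tool_call["name"]
    if name not in REGISTRY:
        raise ValueError(f"Unknown tool: {name}")
    arguments = json.loads(tool_call["arguments"])  # arguments arrive as a JSON string
    return json.dumps(REGISTRY[name](**arguments))


if __name__ == "__main__":
    # Hand-written stand-in for a tool call produced by the model.
    print(dispatch({"name": "get_weather", "arguments": '{"city": "Paris"}'}))
```

The model never runs anything itself. It only names a tool and supplies arguments, and the application decides whether and how to execute the call, which is the piece the Assistants API aims to orchestrate for developers.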

Implications:

Impact on related tooling: While currently better suited to simple use cases given expensive pricing and lack of data security, the Assistants API's ease of use and native retrieval capabilities will make it easier to build AI apps using just OpenAI, and could pose a threat to vector databases and programming frameworks. However, the initial launch left a bit to be desired, with issues such as source documents being downloadable by other users.

Building block towards agents: It’s also a building block towards agents through improved function calling and orchestration, though there’s still more to be done before agents are fully ready.

Closing Thoughts

OpenAI's Dev Day had a vibrancy and buzz reminiscent of the excitement typically seen at Apple's WWDC keynotes. In closing, two overarching themes stand out about OpenAI’s direction, highlighting the company's strategic approach and future ambitions.

Vertical Integration Strategy: OpenAI's efforts in broadening the range of its model modalities, coupled with reductions in pricing and latency, reflect a strategic move towards vertical integration and getting more workloads into production. This includes extending into areas like storage, memory, and retrieval, and, for some customers, fine-tuning GPT-4 and custom models. This indicates a strong focus on capturing a larger share of value in delivering models to enterprise clients. The extent of this vertical integration and its impact on surrounding tooling will be important to watch.

Executing on End-user Ambitions in Parallel: Alongside its B2B/developer-focused initiatives, OpenAI is also making significant strides in going direct to end consumers. Key developments include the continued enhancement of ChatGPT, particularly the pro plan, and, notably, the launch of a platform for users to create GPTs via chat/UI and list them in the GPT Store. This raises intriguing questions: Could the GPT Store emerge as the next big app marketplace? And do consumers prefer centralized access to GPT functionalities via ChatGPT, or do they seek these capabilities integrated into their various existing apps?

Thanks for reading! I write about things related to technology and business once a week on Mondays.