This is a weekly newsletter about the business of the technology industry. To receive Tanay’s Newsletter in your inbox, subscribe here for free:

Hi friends,

With millions of “ChatGPT is going to kill Google” takes posted in the past few months, it was no surprise that, as big tech earnings season got underway, the topic du jour was Generative AI.

Today, I’ll discuss what the big tech companies – Google, Facebook, Microsoft, Amazon, and Apple – had to say about their plans for Generative AI on their most recent earnings calls last week.

I. Microsoft

Microsoft has arguably been the leader in the latest wave of LLMs and Generative AI, investing $10B+ into OpenAI and striking an exclusive partnership with them to be their cloud provider.

But let’s discuss what they’re up to more holistically.

The age of AI is upon us and Microsoft is powering it. - Satya Nadella

A. AI for developers

Microsoft made the Azure OpenAI service available broadly in January. Today, Azure seems best positioned to benefit from this wave of foundation models.

As customers select their cloud providers and invest in new workloads, we are well-positioned to capture that opportunity as a leader in AI. We have the most powerful AI supercomputing infrastructure in the cloud. It's being used by customers and partners like OpenAI to train state-of-the-art models and services, including ChatGPT. Just last week, we made our Azure OpenAI service broadly available, and already over 200 customers from KPMG to Al Jazeera are using it.

Azure will also be the exclusive cloud provider for OpenAI.

We will soon add support for ChatGPT, enabling customers to use it in their own applications for the first time. And yesterday, we announced the completion of the next phase of our agreement with OpenAI. We are pleased to be their exclusive cloud provider … All of this innovation is driving growth across our Azure AI services.

Perhaps Microsoft’s biggest benefit will be preparing its infrastructure ahead of time (though Google will have something to say about that) for this coming world:

That's, in some sense, under the radar, if you will, for the last three and a half, four years, we've been working very, very hard to build both the training supercomputers and now, of course, the inference infrastructure because once you use AI inside of your applications, it goes from just being training-heavy to inference.

B. AI for end users

Microsoft has made it clear that it wants to bring LLM-based AI capabilities into all of its apps.

GitHub Copilot is, in fact, you would say, the most at-scale LLM-based product out there in the marketplace today. And so, we fully expect us to sort of incorporate AI in every layer of the stack, whether it's in productivity, whether it's in our consumer services.

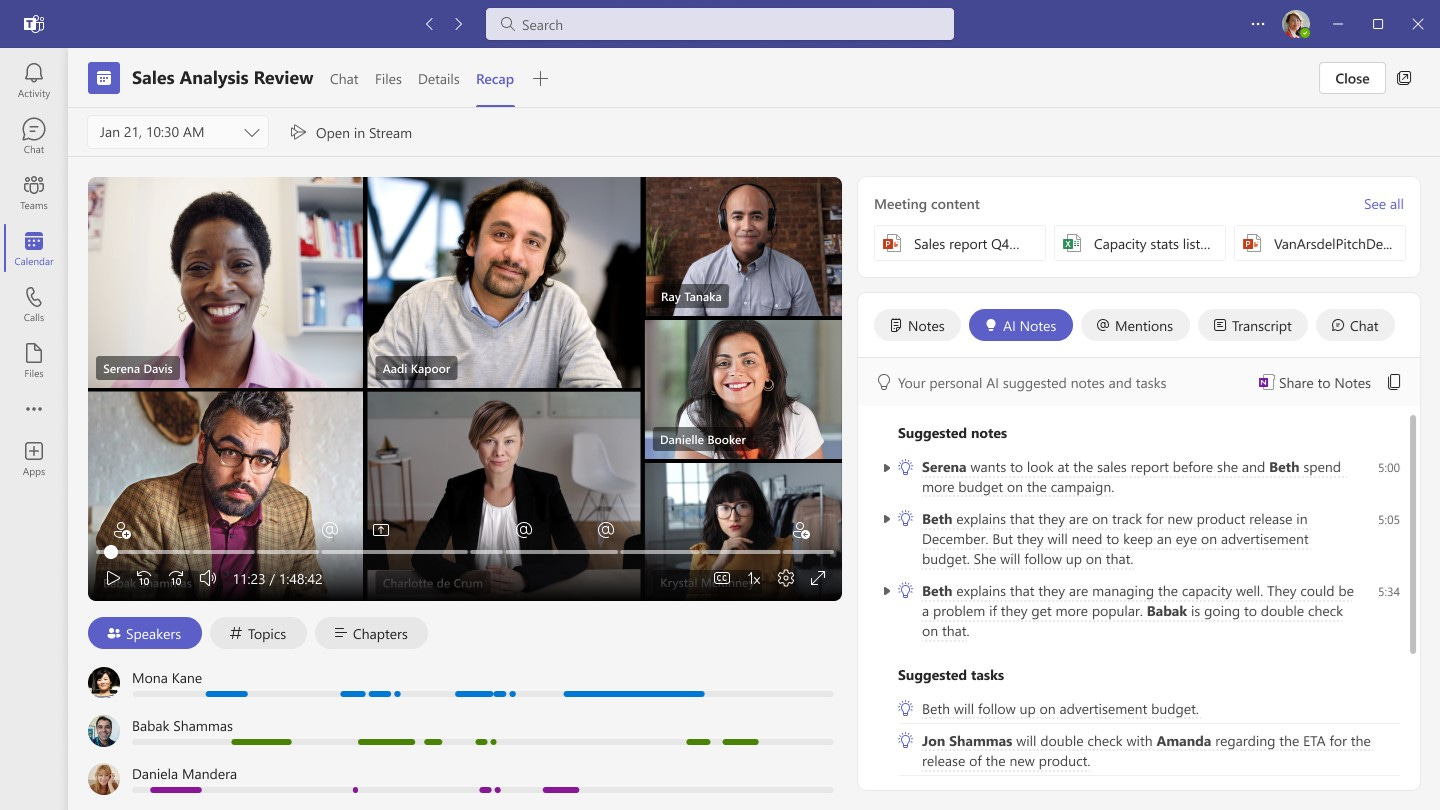

Teams gets AI

We’ve started to see previews of some of this, with them recently showcasing Teams Premium’s AI features, including:

AI-generated notes and action items

AI-generated chapters

AI-generated markers

AI in Microsoft 365 is also coming

While he didn’t touch on it specifically, Satya all but confirmed that AI features would come to Excel, PowerPoint, Outlook, Word, etc. There were rumors in January that Microsoft was experimenting with this, and the hints in the earnings call more or less confirm it.

Microsoft 365 is rapidly evolving into an AI-first platform that enables every individual to amplify their creativity and productivity with both our established applications, as well as new applications like Designer, Stream, and Loop.

C. OpenAI Partnership

Microsoft first partnered with OpenAI ~3 years ago, investing $1B (and potentially as much as $3B with further investment in 2021). Over the last few months, they deepened that partnership, reportedly with another $10B investment in a structure where they get 49% of profits back until they’ve been repaid (after other early investors are repaid first).

But this isn’t just a financial investment of course.

OpenAI uses Azure exclusively for all its training and inference including its released products (ChatGPT is also run on Azure)

Azure makes OpenAI available to enterprise customers via the Azure OpenAI service, capturing the cloud computing from these workloads.

Microsoft integrates OpenAI’s models into its applications and products (which to be fair, any company can do…)

Satya sums it up well:

So, we look to both, there's an investment part to it and there's a commercial partnership. But fundamentally, it's going to be something that's going to drive, I think, innovation and competitive differentiation in every one of the Microsoft solutions by leading in AI.

II. Alphabet

Google has been talking about itself as an AI-first company for ~6 years now, so it’s no surprise that AI continued to be an important part of the discussion. But with many people suggesting that products like ChatGPT could be what finally disrupts Google, the topic took up a large share of this call.

AI is the most profound technology we are working on today. Our talented researchers, infrastructure and technology make us extremely well-positioned as AI reaches an inflection point. - Sundar Pichai

A. AI for end users

A ChatGPT alternative called Bard is coming

Google has in the past talked about its models PaLM (the Pathways Language Model, which has 540B parameters) and LaMDA (Language Model for Dialogue Applications) in the context of research projects.

In their earnings call, they made it clear that they’ll make these models available so that people can start engaging with them, starting with LaMDA.

We’ve published extensively about LaMDA and PaLM, the industry’s largest, most sophisticated model, plus extensive work at DeepMind. In the coming weeks and months, we’ll make these language models available, starting with LaMDA, so that people can engage directly with them. This will help us continue to get feedback, test and safely improve them. These models are particularly amazing for composing, constructing and summarizing.

Sundar even hinted at an area where Google’s version might be better than ChatGPT is today: being up-to-date and more factual.

They will become even more useful for people as they provide up-to-date, more factual information.

Today, they announced the system, detailing Bard, their ChatGPT alternative, and again highlighted that “We’ll combine external feedback with our own internal testing to make sure Bard’s responses meet a high bar for quality, safety and groundedness in real-world information”

Improved Google Search

Sundar also hinted that their most powerful language models will come to search in some experimental formats.

Language models like BERT and MUM have improved Search results for four years now, enabling significant ranking improvements and multimodal search like Google Lens. Very soon, people will be able to interact directly with our newest, most powerful language models as a companion to Search in experimental and innovative ways. Stay tuned.

AI in Docs, Mail, and Workspace

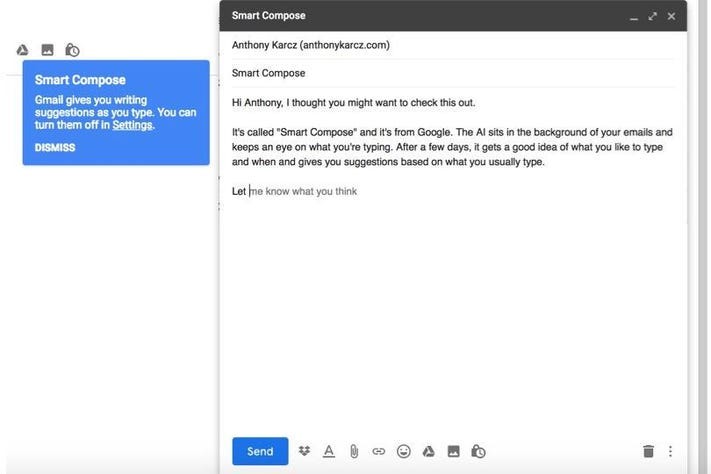

Google also confirmed they’ll be bringing LLMs to Gmail, Docs, and other surfaces in Workspace, building on existing AI-powered features such as Smart Compose. I wonder what this does to a lot of the text/email-based Generative AI startups.

Workspace users benefit from AI-powered features, like Smart Canvas for collaboration and Smart Compose for creation; and we’re working to bring large language models to Gmail and Docs. We’ll also make available other helpful generative capabilities, from coding to design and more.

B. AI for Developers

Not to be left behind in the cloud wars in the context of LLMs, Google will also be making its own language and multimodal AI models available to developers and strengthening its AI cloud platform.

We’ll provide new tools and APIs for developers, creators and partners. This will empower them to innovate and build their own applications, and discover new possibilities with AI, on top of our language, multimodal and other AI models.

Google Cloud is making our technological leadership in AI available to customers via our Cloud AI Platform, including infrastructure and tools for developers and data scientists, like Vertex AI.

C. AI for Advertisers

Google’s matching algorithms obviously already use a lot of AI. For example, LLMs are used to match advertisers to user queries, which can improve performance by up to 35% on certain campaigns.

But Google will also start using GenAI more heavily. To start, Google will be building it into their ad creative products, such as using AI to generate headlines, descriptions, etc.

We're excited to start testing our Automatically Created Assets Beta, which uses AI to generate headlines and descriptions for Search creatives seamlessly once advertisers opt in. – Philipp Schindler, CBO of Google


III. Meta

Amidst all the talk about the Metaverse, it’s easy to forget that Meta has also been investing heavily in AI, though thus far mostly to power recommendations across its apps and advertising products. But they also intend to push into Generative AI, as Zuckerberg made clear on the earnings call.

Generative AI is an extremely exciting new area with so many different applications, and one of my goals for Meta is to build on our research to become a leader in generative AI in addition to our leading work in recommendation AI. - Mark Zuckerberg

In fact, Zuckerberg even highlighted that the two biggest themes for 2023 at Meta are: 1) efficiency and 2) generative AI work.

A. AI for end users

Zuck hinted that Meta is working on integrating LLMs and diffusion models into almost every one of its apps, for generating images, videos, avatars, and 3D assets. One can imagine things like image/video editing via prompts and image/video generation using people’s avatars (or faces) via prompts once the technology gets there. However, he was tight-lipped on the exact implementation in the apps.

We have a bunch of different work streams across almost every single one of our products to use the new technologies, especially the large language models and diffusion models for generating images and videos and avatars and 3D assets and all kinds of different stuff across all of the different work streams that we're working on.

Meta also highlighted the scaling challenges and their experimental/iterative approach to these. On their approach, Zuckerberg said:

So I think you'll see us launch a number of different things this year, and we'll talk about them, and we'll share updates on how they're doing. I do expect that the space will move quickly. I think we'll learn a lot about what works and what doesn't.

On scaling, Zuckerberg noted that reducing the cost of inference is important to bring this to the billions of users on their apps.

A lot of the stuff is expensive, right, to kind of generate an image or a video or a chat interaction. These things we're talking about, like cents or fraction of a cent. So one of the big interesting challenges here also is going to be how do we scale this and make this work more efficient so that way we can bring it to a much larger user base. But I think once we do that, there are going to be a number of very exciting use cases

B. AI for internal efficiency

Somewhat in passing, Zuckerberg mentioned that Meta will be “deploying AI tools to help our engineers be more productive.”

I read that as them potentially rolling out a Copilot-like product that is potentially fine-tuned or trained on their codebase internally to increase the efficiency of development, as well as adding AI into code review and other tools.

C. AI for advertisers

A lot of the core business uses AI heavily, but what about GenAI? While Meta wasn’t specific, they did indicate that they’re heavily investing in AI to build tools to make it easier for advertisers to create and deliver relevant and engaging ads. I took that to mean a hint that they’re working to bring some of the generative AI capabilities of images and video for asset creation for advertisers.

We’re investing heavily in AI to develop and deploy privacy-enhancing technologies and continue building new tools that will make it easier for advertisers to create and deliver more relevant and engaging ads

IV. Amazon

There were no mentions of AI at all on Amazon’s recent earnings call. Given the emphasis Azure and GCP are placing on AI workloads, I’m surprised that Andy Jassy neither mentioned anything specific nor was asked about the plans there.

The one highlight in the earnings report on the customer front was that Stability AI has chosen AWS as their preferred cloud provider.

Stability AI selected AWS as its preferred cloud provider to build and train artificial intelligence (AI) models for the best performance at the lowest cost.

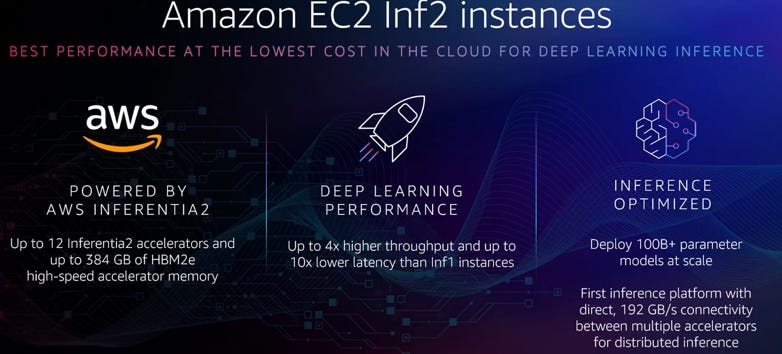

Similarly, on the product front, the one thing called out was the Inf2 instances for low latency and low cost ML inference.

Announced … Inf2 instances, powered by AWS Inferentia2 chips, which deliver the lowest latency at the lowest cost for ML inference on Amazon EC2

V. Apple

Apple was pretty tight-lipped on their exact plans but, when prodded, did note that AI is a major focus and that it would affect every product and service they have. I wouldn’t expect any major changes anytime soon though, given that they only brought up AI once they were asked about it.

Yep. It [AI] is a major focus of ours. It's incredible in terms of how it can enrich customers' lives. And you can look no further than some of the things that we announced in the fall with crash detection and fall detection or back a ways with ECG.

I mean these things have literally saved people's lives. And so we see an enormous potential in this space to affect virtually everything we do. It's obviously a horizontal technology, not a vertical. And so it will affect every product in every service that we have. – Tim Cook

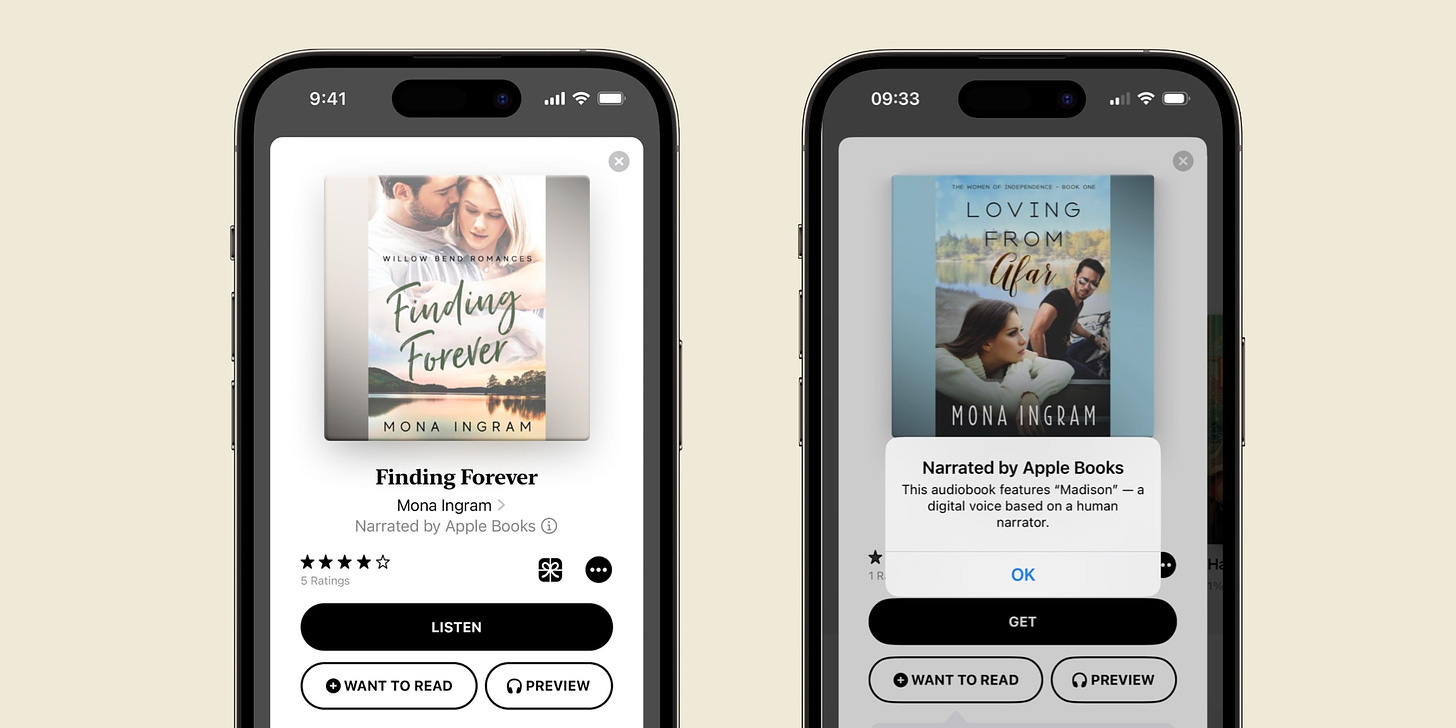

One feature they did launch recently was AI-narrated audiobooks, which could indicate some of the kinds of ways they intend to integrate AI into apps.

Thanks for reading! If you liked this post, give it a heart up above to help others find it or share it with your friends.

If you have any comments or thoughts, feel free to tweet at me.

If you’re not a subscriber, you can subscribe for free below. I write about things related to technology and business once a week on Mondays.
